LaGratta Helps City Courts in Texas Learn from Court User Satisfaction Survey with SurveyStance

There are two leading misconceptions about court user satisfaction surveys: first, that the feedback will be bad, and second, that few people will bother to give any. We spoke to LaGratta Consulting to learn how they busted both myths.

Tell us a little about LaGratta Consulting

Emily LaGratta, a lawyer and the founder of LaGratta Consulting in New York City, specializes in a niche space: helping courts and other justice agencies around the country better engage with and deliver fairness to the people they serve. One of her recent projects, in 2020, helped several city courts in Texas collect and learn from feedback from their “customers” (court users) about their experiences within the judicial system. This is a challenging objective given the serious nature of many court cases and the tense climate of courts. The results were surprising: not only were court users willing to give courts feedback, but the feedback was overwhelmingly positive.

What was the problem you were trying to solve?

This project aimed to tackle two problems. First, LaGratta needed to show courts that customer feedback was valuable to their core operations and purposes. She knows from the research she works with that giving people a voice contributes to their sense of fairness, and all courts should strive to be fair in the eyes of their users. Further, without feedback from court users, courts miss out on great ideas for improvement and an opportunity to boost public trust and confidence. The need for change was urgent.

The second problem was showing courts that there are easy ways to collect and use feedback. Prior to this project, courts had few efficient or cost-effective means of capturing feedback (the usual options were paper surveys or large-scale research studies).

Why did you choose SurveyStance?

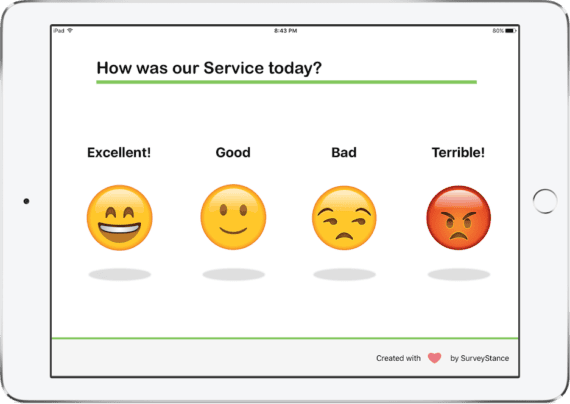

LaGratta researched several solutions, but her criteria were very specific. She wanted a vendor that provided leased kiosk hardware with preinstalled software (to avoid purchasing logistics and the need for in-house IT capacity). Given the first-of-its-kind nature of the project, she wasn’t sure what questions would be appropriate to ask, so it was also important that the feedback questions were customizable. Smiley face responses alone were a deal breaker. LaGratta explained, “It didn’t feel right to expect court users to select ‘happy’ during court cases where very serious matters are at stake, so the thumbs up/down, open text field and custom inputs were great options to get the feedback we needed while being sensitive to the context.”

How did you use SurveyStance?

We equipped each court with one or two iPad kiosks, depending on the size of the court, as well as an email signature feedback link for court users interacting with the court remotely. LaGratta chose to take a building block approach, starting the project with a single question in all courts: “Did the court treat you fairly today?” In subsequent phases, each lasting about two weeks, they layered in additional questions to gain deeper insight into court users’ experiences and to identify improvement opportunities. Each court’s individual feedback data was shared only with that court, but because the project ran as a cohort, courts could see how their feedback measured up against aggregate feedback on the same questions for the whole group.

Busting Myths

What measurable outcomes can you share?

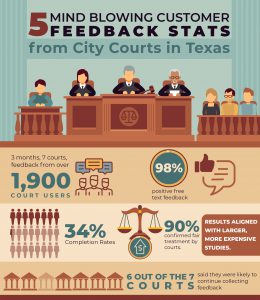

The biggest outcome is that with modest effort and cost, courts were able to gauge their customers’ experience and perceptions of fairness, even during a pandemic. While only one of the seven courts routinely collected user feedback before the project, six courts said after the pilot that they were likely to continue collecting feedback. Further, the feedback was not only very positive – approximately 90% of court users felt the courts treated them fairly – but also aligned with the results of larger, more expensive studies.

The other big outcome was showing that surveys can yield high completion rates in a courthouse setting:

Within a 3-month period, at the height of the pandemic, these seven courts collected feedback from over 1,900 court users.

On average, 14% of people who entered the courts provided feedback, and in some courts, the completion rates were as high as 34%. COVID-19 regulations actually made it easier than usual to calculate completion rates because courts had started tracking how many people came in and out of the building daily.

LaGratta added that she was “really impressed with how easily the courts adopted SurveyStance. The court system does not tend to be a very tech-literate sector, so it’s a testament to how user-friendly the setup is.”

How did the solution help their team and the overall state of their business?

The courts that collected feedback experienced a morale boost that they weren’t anticipating. “Out of all the free text comments received, nearly 98% were positive.” Some feedback even named specific team members, saying how wonderful they were and how they had helped during the visit. Management was thrilled to share the positive feedback with staff, knowing it’s not something that court employees typically hear.

Many court leaders also used the feedback as a new way to convey their impact to city leadership. Four out of seven participating courts shared feedback data with city council or other external agencies.

In Conclusion

Would you recommend SurveyStance to your friends and professional network?

Yes, it’s been a great experience working with SurveyStance. We were trying something new in the court context, which made it all the more valuable to have such a quality product and excellent customer service.

LaGratta “highly recommends” SurveyStance to others who are looking to increase customer engagement and improve customer experience.
